How and why we built a one-stop shop for deep learning models, pre-trained and optimized for different tasks. Now available as an open-source library.

The AI world has evolved dramatically when it comes to developing models. In the early days of deep learning, it was really tough to run models on GPUs, build networks, debug them, and so on. I remember writing code in Lua just to train some basic networks on CIFAR. Even then, the tools were relatively low-level, and you basically had to build everything yourself. Thanks to the open-source community, we now have high-level frameworks like PyTorch, Keras, and TensorFlow 2 for all kinds of models.

You don’t need to reinvent the wheel to build a deep learning application. But even after you select the best model for your task, say from a research paper or an open-source repository, you still need to fine-tune it on your data. Experimenting with a large number of models becomes a real challenge when each one is likely implemented in a different repository, with its own code structure, framework, and metrics.

This is the challenge we wanted to solve, and it is the idea behind SuperGradients. We’ve been called a ‘powerhouse for building and optimizing deep learning models’, and our goal with SuperGradients is to help deep learning practitioners easily explore different models so they can choose the best one for their application.

When we first established Deci, we began by experimenting with our proprietary optimization technology, based on Neural Architecture Search (NAS), on a few models. We needed a tool that would help us work more efficiently. Since nothing like it existed, we built our own tool to train various types of models and get the best results out of any dataset and neural architecture.

The SuperGradients library for training and reproducing state-of-the-art models grew as we added more models, features, and capabilities. As we got better at it, so did our tool, so much so that it became an internal product that everyone in the company, and a few people outside it, wanted to use. Initially, we offered it only to our customers as part of our commercial offering. Now we’re ready to share it as an open-source library so everyone can benefit from it. Personally, I’m grateful for the opportunity to give back to the community by sharing solutions that we developed and have found so valuable.

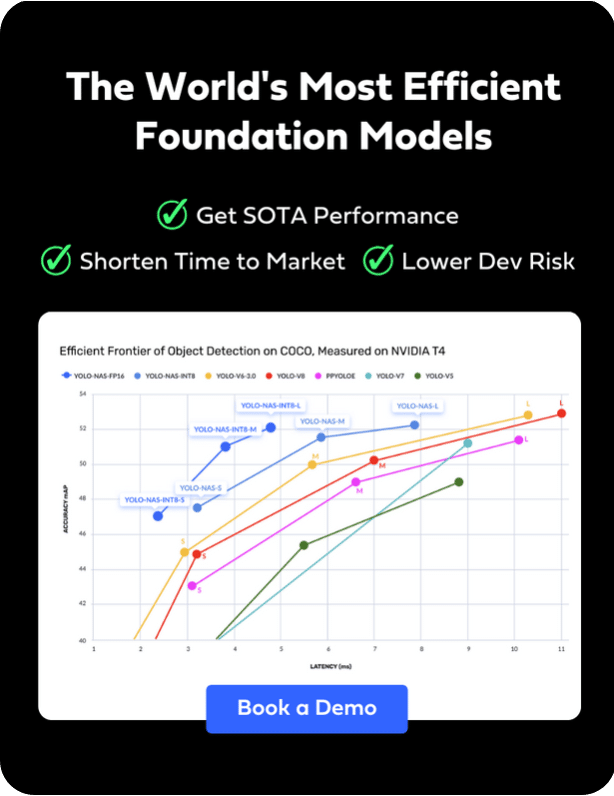

Our vision for SuperGradients is to build the world’s largest model repository, so that anyone can quickly access state-of-the-art models, already tuned and tweaked, and implement them with just a few lines of code. Our talented research and algorithms teams continually update the code with the latest optimization techniques to get the best possible results. For now, the collection includes PyTorch-based computer vision models for image classification, object detection, and semantic segmentation.

Dramatically Cut Back the Time Needed to Build Your Model

It’s no secret that implementing a model based on an academic paper can take weeks. Some papers have no code. Others do, but it is poorly written. You might not be able to reproduce the paper’s results, or the code may not suit your framework or environment. The process ultimately involves a lot of trial and error before you have code that works. With the prep work already done and embedded in SuperGradients, you can implement any model in the library with just a few lines of code.

Take the scenario where the model you need comes from a paper released two years ago. Older models didn’t use the performance-boosting best practices available today, such as weight averaging, advanced data augmentation, and tricks for more stable convergence. When you use a model from the SuperGradients library, these tricks and industry best practices are already built in. Based on the metrics we report, SuperGradients models achieve better accuracy than the originals. As far as we know, the library offers the best available accuracy for some of the models, and the others are still being improved with the goal of delivering best-in-class accuracy.
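Weight averaging is one of the training tricks mentioned here. As a rough illustration only, the sketch below shows one common variant, an exponential moving average (EMA) of model weights, in plain Python. The function name `ema_update`, the dict-of-lists weight layout, and the default `decay` value are hypothetical choices for this example; this is not SuperGradients’ API or its actual implementation, which works on PyTorch tensors.

```python
from typing import Dict, List

# Hypothetical weight layout for this sketch: parameter name -> flat list of values.
Weights = Dict[str, List[float]]


def ema_update(ema: Weights, current: Weights, decay: float = 0.999) -> Weights:
    """Return updated averaged weights: ema = decay * ema + (1 - decay) * current.

    Illustrative sketch only; a real training loop would apply this to
    framework tensors after each optimizer step.
    """
    if not 0.0 <= decay < 1.0:
        raise ValueError("decay must be in [0, 1)")
    # Blend each averaged value with the latest value of the same parameter.
    return {
        name: [decay * e + (1.0 - decay) * c for e, c in zip(ema[name], current[name])]
        for name in ema
    }


# Usage: simulate three training steps for a single two-element parameter.
ema = {"layer.weight": [0.0, 0.0]}
for step_weights in ([1.0, 2.0], [1.0, 2.0], [1.0, 2.0]):
    ema = ema_update(ema, {"layer.weight": step_weights}, decay=0.5)
print(ema)  # prints {'layer.weight': [0.875, 1.75]}
```

In practice, a decay close to 1 makes the averaged weights change slowly, which smooths out noise from individual optimizer steps; the usage example uses 0.5 only so the numbers are easy to follow by hand.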

Reduce Uncertainty with Tested Training Recipes

By taking advantage of field-tested training recipes, you can be confident that the model is implemented and trained the right way. Check out the list of models supported by SuperGradients and the accuracy they achieve in training.

We’re already working on the next steps of our roadmap to add more exciting features that will make life easier for the deep learning community.

Here’s what’s coming next on SuperGradients’ roadmap:

- Many more architectures with SOTA performance

- Advanced features such as DALI integration for data augmentation, quantization-aware training (QAT), and knowledge distillation

- More notebooks (fine-tuning, using recipes, custom data loaders, etc.)

- Seamless integration with Deci Lab for hardware-aware runtime optimization

- Integration with other tools (WandB, Comet, SageMaker, Roboflow, MLflow, etc.)

- Training profiling

Don’t take my word for it. Try out the code, share it with colleagues, and send us feedback!