Deci is thrilled to announce the release of a new object detection model, YOLO-NAS – a game-changer in the world of object detection, providing superior real-time object detection capabilities and production-ready performance. Deci’s mission is to provide AI teams with tools to remove development barriers and attain efficient inference performance more quickly.

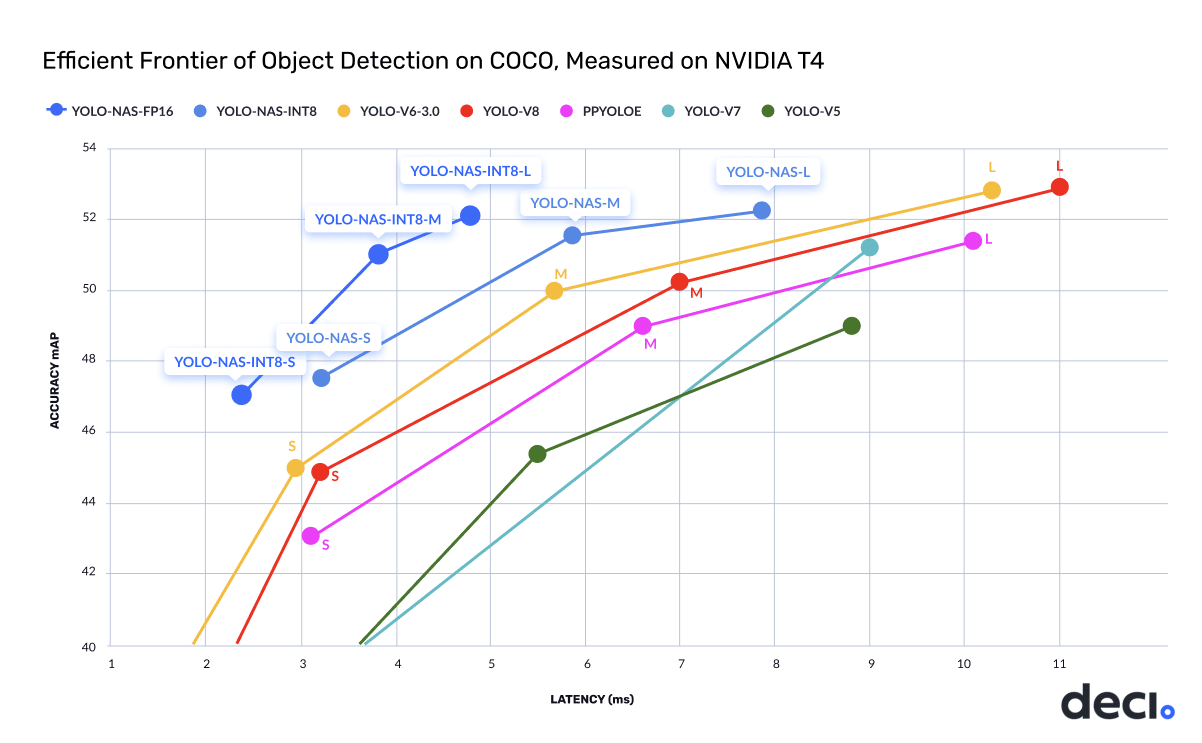

The new YOLO-NAS delivers state-of-the-art (SOTA) performance with an unmatched accuracy-speed trade-off, outperforming other models such as YOLOv5, YOLOv6, YOLOv7, and YOLOv8.

The world of object detection has undergone significant progress over the past few years, with YOLO models leading the charge. However, limitations and challenges in existing YOLO models have driven the need for continuous innovation.

Existing YOLO models face limitations such as inadequate quantization support and insufficient accuracy-latency tradeoffs. YOLO-NAS addresses these concerns by providing production-ready performance and pushing the boundaries of real-time object detection. The new model is pre-trained on well-known datasets including COCO, Objects365, and Roboflow 100, making it well suited for downstream object detection tasks in production environments.

Deci’s proprietary Neural Architecture Search technology, AutoNAC™, generated the YOLO-NAS model. The AutoNAC™ engine lets you input any task, data characteristics (access to data is not required), inference environment, and performance targets, and then guides you to find the optimal architecture that delivers the best balance between accuracy and inference speed for your specific application. In addition to being data and hardware aware, the AutoNAC engine considers other components in the inference stack, including compilers and quantization.

While Deci AutoNAC generated the best general-purpose YOLO to date, we know that there is no such thing as a “one-size-fits-all” model. Trying to use the same off-the-shelf model for live video stream analysis on edge devices and for detection on cloud GPUs will result in suboptimal performance.

To achieve the best performance possible for your specific use case, the ideal model architecture should take into consideration your data characteristics (image resolution, object size, etc.) as well as the attributes of your inference hardware such as parallelization capabilities, operator efficiencies, and memory cache size.
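To make the "no one-size-fits-all" point concrete, here is a minimal sketch of picking the most accurate model that still meets a latency budget on a given hardware target. It is not AutoNAC, which is proprietary and searches over architectures; it only illustrates why the same candidates can rank differently on different hardware. The `Candidate` class, the `pick_model` helper, and every number below are hypothetical and made up for illustration, not measurements.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Candidate:
    name: str
    accuracy: float               # hypothetical accuracy score, higher is better
    latency_ms: Dict[str, float]  # hypothetical latency per hardware target


def pick_model(
    candidates: List[Candidate], hardware: str, latency_budget_ms: float
) -> Optional[Candidate]:
    """Return the most accurate candidate within the latency budget, or None."""
    feasible = [c for c in candidates if c.latency_ms[hardware] <= latency_budget_ms]
    if not feasible:
        return None
    return max(feasible, key=lambda c: c.accuracy)


# Illustrative values only; they do not describe any real model.
CANDIDATES = [
    Candidate("small", 0.45, {"edge": 12.0, "cloud_gpu": 2.0}),
    Candidate("medium", 0.50, {"edge": 30.0, "cloud_gpu": 4.0}),
    Candidate("large", 0.52, {"edge": 55.0, "cloud_gpu": 7.0}),
]

if __name__ == "__main__":
    # A 33 ms budget corresponds to roughly 30 frames per second.
    for hw in ("edge", "cloud_gpu"):
        best = pick_model(CANDIDATES, hw, latency_budget_ms=33.0)
        print(hw, "->", best.name if best else "no feasible model")
```

With the same 33 ms budget, the sketch selects a different candidate for the edge device than for the cloud GPU, which is the situation the paragraphs above describe.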

Deci’s AutoNAC is a groundbreaking technology that democratizes the use of Neural Architecture Search for every organization and helps teams quickly generate custom, fast, accurate, and efficient deep learning models.

Teams that develop models with AutoNAC achieve better-than-SOTA inference performance while significantly reducing their development time and risk.

As demonstrated in the chart below, the YOLO-NAS (m) model delivers a 50% (1.5x) increase in throughput and 1 mAP point higher accuracy compared to other SOTA YOLO models on the NVIDIA T4 GPU.

The YOLO-NAS model is released under an open-source license, with pre-trained weights available for non-commercial use on SuperGradients. With SuperGradients, Deci’s PyTorch-based, open-source computer vision training library, users can train models from scratch or fine-tune existing ones, leveraging advanced built-in training techniques like Distributed Data Parallel, Exponential Moving Average, Automatic Mixed Precision, and Quantization Aware Training.

The SuperGradients library is always up-to-date with the latest advancements in deep learning training. It is user-friendly and well-documented, making it easy for you to train and deploy YOLO-NAS or any other computer vision model.
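As a rough sketch of what getting started might look like, the snippet below loads a pre-trained YOLO-NAS variant through SuperGradients. It assumes the library is installed (`pip install super-gradients`) and relies on `models.get` and the `yolo_nas_s` / `yolo_nas_m` / `yolo_nas_l` model names as we understand the SuperGradients API; check the library docs for your version. The helper names `yolo_nas_model_name` and `load_yolo_nas` are our own, introduced only for this example.

```python
# Size suffix -> model name, as we understand the SuperGradients naming.
YOLO_NAS_VARIANTS = {"s": "yolo_nas_s", "m": "yolo_nas_m", "l": "yolo_nas_l"}


def yolo_nas_model_name(size: str) -> str:
    """Map a size letter ('s', 'm', 'l') to the corresponding model name."""
    try:
        return YOLO_NAS_VARIANTS[size.lower()]
    except KeyError:
        raise ValueError(
            f"Unknown YOLO-NAS size {size!r}; expected one of {sorted(YOLO_NAS_VARIANTS)}"
        ) from None


def load_yolo_nas(size: str = "m", pretrained_weights: str = "coco"):
    """Load a YOLO-NAS model with pre-trained weights (hypothetical helper)."""
    # Imported lazily so the name helper above works without the library installed.
    from super_gradients.training import models

    return models.get(yolo_nas_model_name(size), pretrained_weights=pretrained_weights)


if __name__ == "__main__":
    # Downloads the weights on first use; requires network access.
    model = load_yolo_nas("m")
    print(type(model).__name__)
```

From there, the returned model is a regular PyTorch module, so it can be fine-tuned with the SuperGradients training tools or used for inference. Remember that the pre-trained weights are offered for non-commercial use.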

The release of YOLO-NAS delivers a major leap forward in the inference performance and efficiency of object detection models, addressing the limitations of traditional models and offering unprecedented adaptability for diverse tasks and hardware.

We can’t wait for you to try it out and share your feedback with us.

Check out the YOLO-NAS technical blog, docs, and example notebooks, and be sure to give YOLO-NAS a star on GitHub.