The new YOLO-NAS delivers state-of-the-art object detection capabilities with unparalleled accuracy, outperforming notable competing models such as YOLOv6, YOLOv7 and YOLOv8.

TEL AVIV, Israel, May 3, 2023 — Deci, the deep learning company harnessing AI to build AI, today announced the release of YOLO-NAS, its new deep learning model providing superior real-time object detection capabilities and production-ready performance. This foundation model was generated by Deci’s Neural Architecture Search Technology, AutoNAC™, and delivers unparalleled accuracy and speed, outperforming competing models, most notably YOLOv6, YOLOv7 and YOLOv8.

The world of object detection has undergone significant progress over the past few years, with YOLO models leading the charge. However, limitations and challenges in existing YOLO models, such as inadequate quantization support and insufficient accuracy-latency tradeoffs, have driven the need for continuous innovation. Deci’s new YOLO-NAS addresses these concerns by pushing the boundaries of object detection with superior real-time capabilities.

“The release of YOLO-NAS is a major leap forward for inference performance and efficiency of object detection models, addressing the limitations of previous YOLO models and offering unprecedented adaptability for diverse tasks and hardware,” said Yonatan Geifman, CEO and co-founder of Deci.

Deci’s AutoNAC is a groundbreaking technology that democratizes the use of Neural Architecture Search for every organization and helps teams quickly generate custom, fast, accurate and efficient deep learning models. AutoNAC generates best-in-class deep learning model architectures for any task in any environment, delivering the best balance between accuracy and inference speed. In addition to being data and hardware aware, the AutoNAC engine considers other components in the inference stack, including compilers and quantization.

“While Deci AutoNAC generated the best general-purpose YOLO version to date, we know that there is no such thing as a ‘one-size-fits-all’ model. Trying to use the same off-the-shelf model for live video stream analysis on edge devices and for detection on cloud GPUs will result in suboptimal performance,” said Prof. Ran El-Yaniv, co-founder and Chief Scientist at Deci.

In computer vision, the conventional off-the-shelf approach falls short of top performance, particularly in edge AI deployments. An ideal neural architecture must account for fine details of the task, including image resolution and object size, as well as hardware attributes such as parallelization capabilities, operator efficiencies, and memory cache size.

El-Yaniv further explains, “The devil is in the details, and designing such optimal architectures is an incredibly complex task, often too difficult for humans to tackle alone. Deci’s pioneering AutoNAC engine empowers AI teams to construct state-of-the-art architectures that impeccably align with their applications, delivering unparalleled results.”

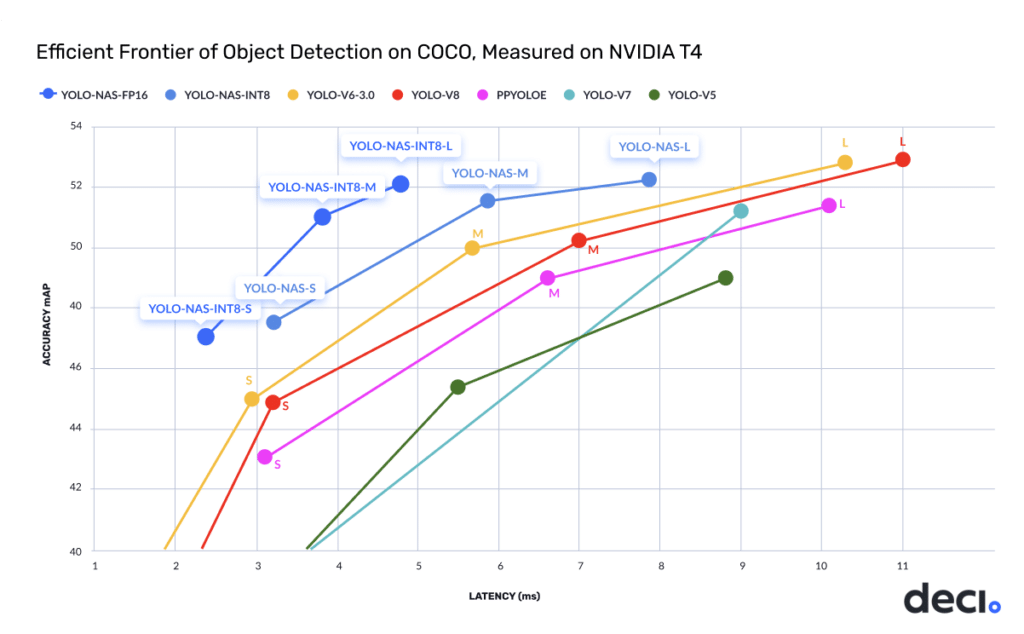

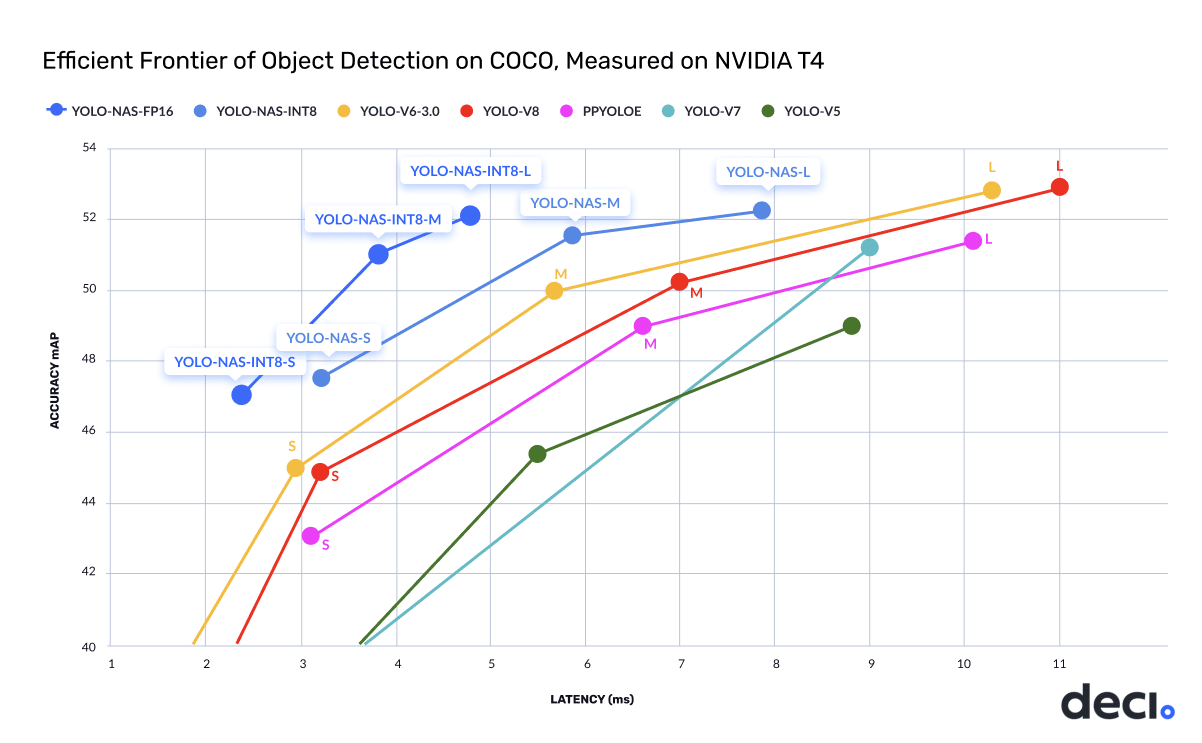

As demonstrated in the chart above, the YOLO-NAS (m) model delivers a 50% (1.5x) increase in throughput and 1 mAP higher accuracy compared with other SOTA YOLO models on the NVIDIA T4 GPU.
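For readers who want to relate the throughput figure to per-image latency, the arithmetic can be sketched as follows. This is an illustration only: the baseline of 100 frames per second is a hypothetical number, not a measurement from this announcement, and the sketch assumes latency per image is simply the reciprocal of throughput at a fixed batch size.

```python
def speedup_from_increase(percent_increase):
    """Convert a percentage throughput increase into a multiplicative speedup."""
    return 1.0 + percent_increase / 100.0


def relative_latency(speedup):
    """Per-image latency relative to the baseline, assuming latency = 1 / throughput."""
    return 1.0 / speedup


# A 50% increase in throughput is the same thing as a 1.5x speedup.
speedup = speedup_from_increase(50)

# Hypothetical baseline, chosen only to make the arithmetic concrete.
baseline_fps = 100.0
new_fps = baseline_fps * speedup

# Under the stated assumption, per-image latency falls to about two thirds
# of the baseline, i.e. roughly a 33% reduction.
rel_latency = relative_latency(speedup)

print(f"speedup: {speedup}x")
print(f"throughput: {baseline_fps} -> {new_fps} frames per second")
print(f"relative latency: {rel_latency:.3f}")
```

Note that a 50% throughput gain corresponds to roughly a 33% latency reduction under this assumption, not 50%.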

The YOLO-NAS model is pre-trained on well-known datasets including COCO, Objects365, and Roboflow 100, making it well suited for downstream object detection tasks in production environments. The new model is released under an open-source license, with pre-trained weights available for research (non-commercial) use on SuperGradients, Deci’s PyTorch-based, open-source computer vision training library. With SuperGradients, users can train models from scratch or fine-tune existing ones, leveraging advanced built-in training techniques such as Distributed Data Parallel, Exponential Moving Average, Automatic Mixed Precision, and Quantization Aware Training.
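To give a sense of one of the training techniques named above, Exponential Moving Average, here is a minimal framework-free sketch of the general idea. It is not SuperGradients' implementation: the function name `ema_update`, the toy weights, and the decay value are all hypothetical and chosen only for illustration.

```python
def ema_update(shadow, params, decay=0.999):
    """Blend the current weights into a smoothed "shadow" copy.

    Each shadow weight becomes decay * shadow + (1 - decay) * current weight.
    """
    return [decay * s + (1.0 - decay) * p for s, p in zip(shadow, params)]


# Toy "model" with two weights; the shadow copy starts equal to the weights.
params = [0.0, 10.0]
shadow = list(params)

for step in range(3):
    # Stand-in for an optimizer step: every weight moves by +1.0.
    params = [p + 1.0 for p in params]
    # A small decay is used here so the effect is visible within three steps.
    shadow = ema_update(shadow, params, decay=0.5)

print("raw weights:", params)
print("EMA weights:", shadow)
```

The averaged weights lag behind the raw weights and smooth out step-to-step noise, which is why an EMA copy is often the one evaluated or exported after training.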

Deci’s SuperGradients library incorporates the latest advancements in deep learning training. If you would like to train and deploy YOLO-NAS or any other computer vision model, please visit our GitHub repository.

This announcement was originally published on Cision PRWeb.