Crafting the Next Generation of AI

Artificial intelligence lies at the core of the Fourth Industrial Revolution.

Our goal at Deci is to enable more deep learning models to perform at their best in production and fulfill their true potential.

We took an innovative approach, using AI itself to craft the next generation of deep learning.

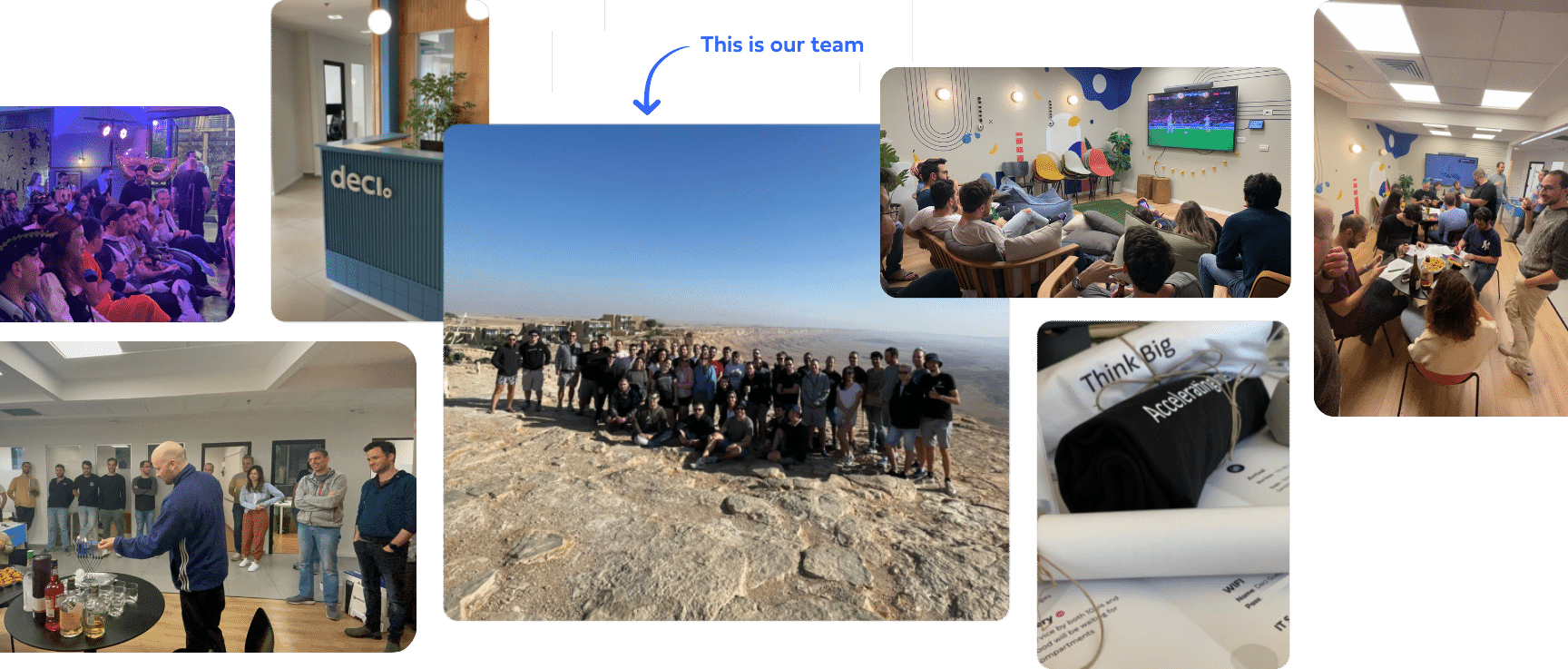

A multi-talented team with a shared passion for technology

Deci was founded in 2019 by world-recognized AI experts with an innate passion for creative innovation, who forged a talented team of deep learning researchers and engineers.

Deci’s team consists of top AI researchers with advanced academic degrees from prestigious universities, as well as exceptional backgrounds in elite units and leading tech companies.

Our experts are fully dedicated to advancing the state of the art and have co-authored dozens of publications in top-tier machine learning venues such as NeurIPS, ICML, ICLR, CVPR, and ACL.

Backend Engineer

Deep Learning Engineer

Deep Learning Engineer

Research Scientist

Enterprise Account Executive

Deep Learning Software Engineer

Deep Learning Software Engineer

Director of Sales Development

Deep Learning Product Manager

Deep Learning Research Engineer

Deep Learning Engineer

VP Finance

Deep Learning Research Engineer

Enterprise Account Executive

Deep Learning Engineer

Co-Founder & Chief Scientist

Co-Founder & COO

Content Marketing Manager

VP Sales

Account Executive

Senior Frontend Engineer

Sales Development Representative

Deep Learning Research Engineer

Sales Engineer

Deep Learning Research Engineer

Co-Founder & CEO

Financial Controller

Full Stack Developer

Sales Engineer

Sales Development Representative

Deep Learning Research Engineer

VP Strategy & Business Development

Technical Product Marketing Manager

Deep Learning Engineer

Deep Learning Engineer

Full Stack Engineer

VP People

NLP Research Engineer

Marketing Manager

Deep Learning Software Engineer

Head of AI

Project Manager

NLP Research Engineer

HR Manager

Sales Development Representative

Lead Bookkeeper

AI Product Manager

IT System Admin

Deep Learning Research Engineer

Deep Learning Engineer

Deep Learning Research Engineer

Customer Facing Deep Learning Group Manager

DevOps Engineer

DevRel Manager

VP Marketing

Deep Learning Research Scientist

Deep Learning Engineer, Team Lead

Deep Learning Software Engineer

VP Operations & General Manager, US

Director of Strategic Partnership

Employee Experience & Office Manager

Research Scientist

Deep Learning Research Engineer

Technical Product Manager

Research Scientist

Deep Learning Software Engineer

VP Engineering

Deci is ISO 27001 Certified

from transformers import AutoFeatureExtractor, AutoModelForImageClassification

extractor = AutoFeatureExtractor.from_pretrained("microsoft/resnet-50")

model = AutoModelForImageClassification.from_pretrained("microsoft/resnet-50")