In recent years, vision transformers have gained considerable attention for their ability to capture global dependencies and handle inputs of varying sizes through an architecture built on self-attention. Despite this potential, their widespread adoption has been hindered, especially on edge devices, by slow inference, high computational demands, and a large memory footprint.

It’s time to redefine what’s possible!

We are thrilled to introduce Deci’s AutoNAC engine support for vision transformers, marking a significant leap forward in optimizing the performance and efficiency of these cutting-edge models. The support provided by Deci’s AutoNAC engine unlocks new possibilities for vision transformers across a myriad of applications. With enhanced performance and efficiency, vision transformers can now be deployed more effectively in real-world scenarios that demand both highly accurate and fast image processing.

Democratizing the Power of NAS for Vision Transformers

Vision transformers’ attention mechanism allows the model to capture global dependencies and long-range interactions between image patches, enabling a holistic understanding of the image. As a result, vision transformers demonstrate exceptional performance in tasks requiring fine-grained detail and context comprehension. However, self-attention compares every image patch with every other patch, so its cost grows quadratically with the number of patches, and vision transformers also tend to have more parameters than comparable CNNs. Together, these factors make them more computationally intensive and slower at inference. Consequently, the use of traditional NAS for designing vision transformers has been limited by the long search times and compute-intensive operations that a traditional search requires.
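To make the compute cost of self-attention concrete, here is a minimal back-of-the-envelope sketch. It assumes a plain ViT-style model that splits a square image into non-overlapping patches and attends over all of them. The function name `attention_cost` and the example sizes are illustrative assumptions, and the estimate ignores projections, MLP layers, and any attention-efficiency techniques a specific model may use.

```python
def attention_cost(image_size: int, patch_size: int, embed_dim: int) -> dict:
    """Rough per-layer, per-image self-attention cost for a ViT-style model.

    Illustrative estimate only: counts the two large matrix products in
    attention (Q @ K^T and attention @ V) and nothing else.
    """
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")

    tokens = (image_size // patch_size) ** 2   # number of image patches
    attn_entries = tokens * tokens             # every patch attends to every patch
    attn_macs = 2 * attn_entries * embed_dim   # Q @ K^T plus attention @ V

    return {"tokens": tokens, "attn_entries": attn_entries, "attn_macs": attn_macs}


small = attention_cost(image_size=224, patch_size=16, embed_dim=768)
large = attention_cost(image_size=448, patch_size=16, embed_dim=768)

print(small["tokens"], large["tokens"])          # 196 784
print(large["attn_macs"] / small["attn_macs"])   # 16.0
```

Doubling the image side quadruples the number of patches and multiplies the attention cost by 16, which is why high-resolution inputs on edge devices are especially demanding.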

Deci’s highly efficient AutoNAC engine now includes transformer blocks in its search space. These are the architectural components used by vision transformer models such as ViT, SegFormer, and others. This capability empowers users to discover efficient vision transformer architectures without the painstaking task of manual exploration. With AutoNAC, you can efficiently explore and discover optimal neural architectures for vision tasks and achieve higher accuracy and performance in just a few days.

In addition to optimizing the convolutional layers, AutoNAC for vision transformers also considers the structure of the self-attention layers, positional encodings, number of attention heads, layer sizes, and other transformer-specific hyperparameters.
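To give a feel for what a search space over transformer-specific hyperparameters can look like, here is a small, hypothetical sketch. It is not AutoNAC’s API or search algorithm: the names `TransformerBlockConfig`, `SEARCH_SPACE`, and `sample_block` are invented for illustration, the candidate values are arbitrary, and plain random sampling stands in for a real search strategy.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class TransformerBlockConfig:
    """One candidate transformer block (hypothetical, for illustration)."""
    embed_dim: int
    num_heads: int
    mlp_ratio: float
    positional_encoding: str


# Hypothetical search space; the values are arbitrary examples.
SEARCH_SPACE = {
    "embed_dim": [64, 128, 192, 256],
    "num_heads": [1, 2, 4, 6, 8],
    "mlp_ratio": [2.0, 4.0],
    "positional_encoding": ["learned", "sinusoidal", "none"],
}


def sample_block(rng: random.Random) -> TransformerBlockConfig:
    """Draw one random candidate, resampling until it is structurally valid."""
    while True:
        cfg = TransformerBlockConfig(
            **{name: rng.choice(options) for name, options in SEARCH_SPACE.items()}
        )
        # Multi-head attention splits embed_dim evenly across the heads.
        if cfg.embed_dim % cfg.num_heads == 0:
            return cfg


rng = random.Random(0)  # fixed seed so the draw is repeatable
for candidate in (sample_block(rng) for _ in range(3)):
    print(candidate)
```

Even this toy space contains dozens of valid combinations per block, and a full model stacks many blocks, which hints at why exploring such spaces by hand is impractical.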

Boosting Transformer Efficiency with AutoNAC: Faster and More Accurate Models

With its expanded capabilities, AutoNAC has taken another significant stride forward, now producing remarkably efficient and high-performing transformer models designed for both Natural Language Processing (NLP) and computer vision tasks.

Deci has already set a benchmark in the MLPerf competition with its AutoNAC-generated DeciBERT architecture. This innovative model outshone the competition in the BERT 99.9 category, setting new standards for accuracy and throughput. Building on this success, AutoNAC’s power has now been harnessed to create an exceptional transformer-based model for semantic segmentation.

Enhanced Accuracy and Superior Throughput for SegFormer

A large company in the public sector, developing an autonomous navigation platform running on edge devices, was looking to improve its product performance.

The Problem: Lower than Desired Throughput

The team implemented vision transformers to support highly accurate semantic segmentation capabilities within one of their products. Vision transformers’ ability to understand global context allowed them to generate high-quality segmentations, capturing intricate object boundaries and improving the overall accuracy of the task. Their model of choice was SegFormer, a semantic segmentation framework that unifies Transformers with lightweight multilayer perceptron (MLP) decoders. While the model reached the target accuracy, the team couldn’t deliver the required throughput to improve the product and support its advanced and differentiated features.

The Solution: AutoNAC-generated architecture

By applying Deci’s AutoNAC engine, the company generated a new transformer-based architecture specifically designed to perform well on the NVIDIA Jetson Orin NX device. The new vision transformer model generated with AutoNAC is 3.33x faster and +0.8 more accurate than the original SegFormer model. Once the new-and-improved transformer-based architecture was generated, the customer trained it with SuperGradients, Deci’s open-source training library for computer vision models.
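To put the 3.33x figure in perspective, the short sketch below converts a speedup factor into throughput and latency terms. It assumes "faster" refers to a throughput ratio at a fixed batch size. The baseline of 10 frames per second is a made-up number for illustration, not a measurement from this project, and `apply_speedup` is a hypothetical helper.

```python
def apply_speedup(baseline_fps: float, speedup: float) -> dict:
    """Translate a throughput speedup into new FPS and per-frame latency."""
    if baseline_fps <= 0 or speedup <= 0:
        raise ValueError("baseline_fps and speedup must be positive")

    new_fps = baseline_fps * speedup
    return {
        "fps": new_fps,
        "latency_ms": 1000.0 / new_fps,                    # time per frame
        "latency_reduction_pct": (1 - 1 / speedup) * 100,  # independent of baseline
    }


# Hypothetical baseline of 10 FPS; only the 3.33x factor comes from the text.
result = apply_speedup(baseline_fps=10.0, speedup=3.33)
print(result)
```

Whatever the baseline, a 3.33x throughput gain corresponds to roughly a 70% reduction in per-frame latency under this assumption.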

Business Impact: Expedited Market Entry and Superior Product Quality

In the realm of autonomous navigation, the speed and accuracy of inference on edge devices play pivotal roles in the successful deployment of a product. With faster inference speeds, the platform can swiftly process real-time data, enabling quicker decision-making and response times. The heightened accuracy of the model ensures more reliable navigation in complex environments. Attempting to reach such high accuracy and inference speed manually is a time-consuming and risky process that would have delayed crucial improvements to the product. By harnessing Deci’s technology, the company was able to quickly deliver a superior product and offer customers a heightened level of performance, efficiency, and confidence.

“Using Deci, we were able to reach our performance targets for production and successfully launch our autonomous navigation. What we originally planned to do in 8 months, we were able to accomplish in no more than 3 weeks.”

CTO at a large company in the public sector

With its optimization capabilities extended to support vision transformers, the AutoNAC engine unlocks unparalleled performance for computer vision, bridging the gap between theoretical advancements and practical deployment.

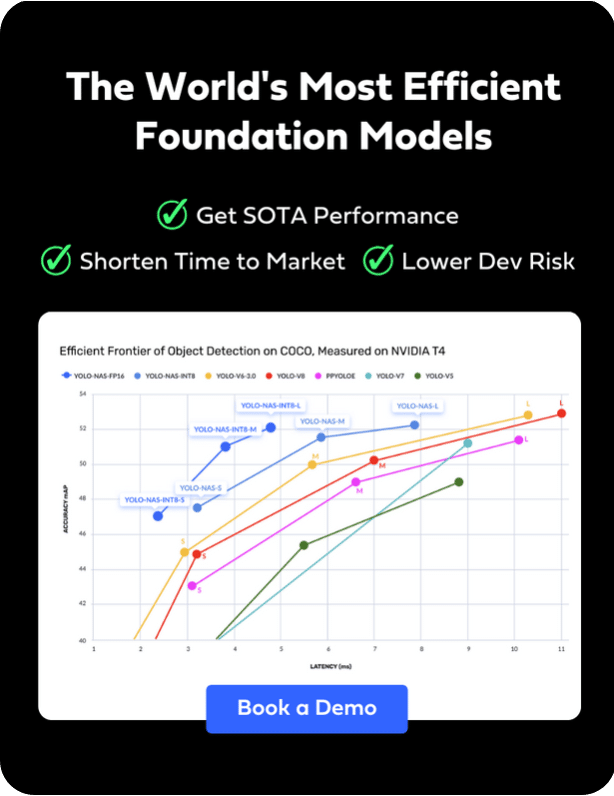

To learn more about Deci’s platform and its AutoNAC engine, book a demo today.