Over the last few years, we’ve witnessed how quickly AI, particularly deep learning, has transformed business and impacted our society. However, this rapid advancement has brought with it a new set of challenges: hardware constraints, poor inference performance, high operational costs, and long development cycles.

Under these constraints, maximizing performance and efficiency while maintaining a model’s original accuracy has never been easy. Until now, most organizations have struggled to achieve real value from AI.

Today, we’re excited to open access to Deci’s Deep Learning Platform through our website. We built it to help data scientists optimize and scale their models into effective, production-grade solutions on any hardware.

To accelerate the productization of deep learning models, the platform automatically combines multiple algorithmic and software techniques in a managed way to optimize the inference of trained models. Users can achieve up to 5X inference acceleration in just a few steps, without compromising the model’s original accuracy.

Simply put, Deci’s platform helps data scientists deliver better, production-grade deep learning models faster than ever, with a substantial performance boost and a reduced cost-to-serve.

The Challenges of AI and Deep Learning

AI is one of the most promising new technologies shaping the future. However, it must overcome major hurdles to generate value consistently. According to an IDC report, one in four companies reports that 50% of its AI projects fail.

Deep learning, a subset of AI, powers more advanced use cases such as computer vision and language processing. Its mainstream adoption is hampered by high algorithmic complexity and heavy computational demands. Quoted in WIRED, Song Han, an assistant professor at MIT who specializes in efficient deep learning computing, says, “Deep neural networks are very computationally expensive. This is a critical issue.”

In short, data scientists building and running deep learning models face long development cycles, poor inference performance, hardware limitations, costly operations, and difficulty fitting models to business constraints. For AI to progress, reaching top performance and efficiency must become easy and accessible.

Everything You Need to Know About Deci’s Deep Learning Platform

Given the current state of AI, we wanted to create a platform that will help data scientists deliver production-grade deep learning models faster than ever, with better performance and reduced cost-to-serve. We’re happy to announce that it’s finally available. You can now access Deci’s Deep Learning Platform through our website.

We built something that we think the entire world, and more specifically the data science community, can benefit from. We removed any hurdles and opened it for free, with no strings attached.

Platform Benefits and How It Works

The main benefit of using Deci’s Deep Learning Platform is the automatic inference optimization of trained deep learning models. You can achieve up to 5X inference acceleration (higher throughput or lower latency) in a few easy steps, without compromising accuracy.
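To make “up to 5X acceleration” concrete, the two quantities can be written as follows. These are generic definitions we assume for illustration, not formulas taken from Deci’s documentation: $B$ is the batch size, $t_{\text{orig}}$ and $t_{\text{opt}}$ are the per-batch latencies of the original and optimized models. At a fixed batch size, the throughput speedup equals the latency speedup.

```latex
\text{throughput} = \frac{B}{t}, \qquad
\text{speedup} = \frac{t_{\text{orig}}}{t_{\text{opt}}}
             = \frac{B / t_{\text{opt}}}{B / t_{\text{orig}}}
```

For example, with hypothetical numbers, a per-batch latency that drops from 50 ms to 10 ms at the same batch size is a 5X speedup in both latency and throughput.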

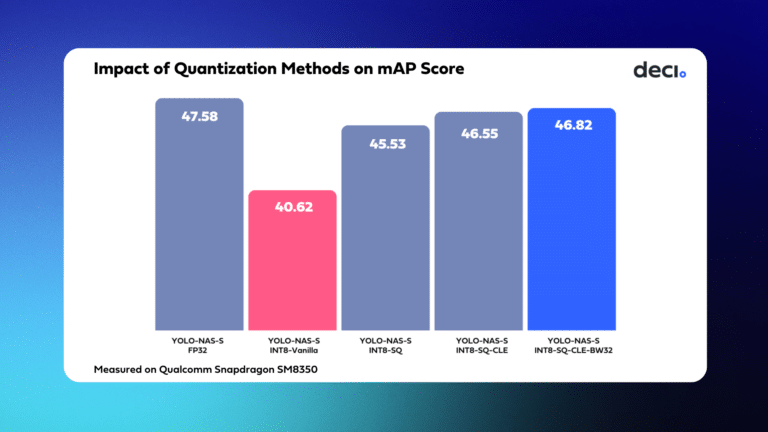

The technology behind the optimization combines multiple algorithmic and software techniques in an automatic and managed manner. These include quantization and graph compilation. Our premium tier offers more advanced optimization based on Deci’s AutoNAC technology.
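To illustrate what quantization means in general, here is a minimal sketch of symmetric, per-tensor int8 post-training quantization in plain Python. It is a textbook version of the technique, not Deci’s implementation; the helper names `quantize_int8` and `dequantize_int8` are hypothetical. Each float is divided by a scale, rounded to an integer in [-127, 127], and can later be mapped back.

```python
from typing import List, Sequence, Tuple

def quantize_int8(values: Sequence[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor int8 quantization: q = round(x / scale), clipped to [-127, 127]."""
    max_abs = max((abs(v) for v in values), default=0.0)
    # Map the largest magnitude onto 127; use scale 1.0 for an all-zero tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale

def dequantize_int8(q: Sequence[int], scale: float) -> List[float]:
    """Map int8 codes back to approximate float values."""
    return [x * scale for x in q]

if __name__ == "__main__":
    weights = [0.5, -1.27, 0.0, 1.0]  # illustrative values, not from a real model
    q, scale = quantize_int8(weights)
    restored = dequantize_int8(q, scale)
    # Each restored value differs from the original by at most scale / 2.
    print(q, max(abs(a - b) for a, b in zip(weights, restored)))
```

Storing 8-bit integers instead of 32-bit floats shrinks the tensor and lets hardware use faster integer arithmetic; the price is a per-value rounding error of at most half the scale.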

5 Steps from Log-in to Model Serving in Production

You can optimize your model’s inference performance in just a few clicks and get the results within minutes, not weeks. Simply follow these steps:

- Upload a trained model or use our example model (ResNet-50 trained on ImageNet).

- Benchmark your inference performance.

- Optimize your model for your target hardware and inference scenario.

- Compare the optimized model to the original model to see the boost.

- Deploy to a containerized inference engine to run and test the models in your staging environment and then in production.
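The benchmark and compare steps can be mimicked locally with a generic timing harness. This is a minimal sketch under our own assumptions: `benchmark` and `compare` are hypothetical helpers, not part of Deci’s client or API, and the two stand-in “models” are plain functions that only sleep.

```python
import statistics
import time
from typing import Callable, Dict, Sequence

def benchmark(model: Callable[[Sequence[float]], object], batch: Sequence[float],
              warmup: int = 5, runs: int = 50) -> Dict[str, float]:
    """Call `model` on `batch` repeatedly; report median latency (ms) and throughput (samples/s)."""
    for _ in range(warmup):  # warm-up calls are not timed (caches, lazy initialization)
        model(batch)
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        model(batch)
        latencies.append(time.perf_counter() - start)
    median_s = statistics.median(latencies)  # the median is robust to occasional outliers
    return {
        "latency_ms": median_s * 1000.0,
        "throughput": len(batch) / median_s if median_s > 0 else float("inf"),
    }

def compare(original: Dict[str, float], optimized: Dict[str, float]) -> float:
    """Latency speedup of `optimized` over `original`; values above 1.0 mean faster."""
    if optimized["latency_ms"] == 0:
        return float("inf")
    return original["latency_ms"] / optimized["latency_ms"]

def original_model(batch: Sequence[float]) -> None:
    time.sleep(0.004)  # hypothetical stand-in for the original model

def optimized_model(batch: Sequence[float]) -> None:
    time.sleep(0.001)  # hypothetical stand-in for the optimized model

if __name__ == "__main__":
    batch = [0.0] * 8
    before = benchmark(original_model, batch, warmup=2, runs=20)
    after = benchmark(optimized_model, batch, warmup=2, runs=20)
    print(f"speedup: {compare(before, after):.1f}x")
```

Reporting the median over many runs, after a few untimed warm-up calls, keeps one-off stalls from skewing the comparison.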

A Walkthrough of More Features and Technical Compatibility

Our deep learning platform supports multiple deep learning frameworks and deployment options. We will be adding more features in the future.

- Currently compatible with models in ONNX, TensorFlow, Keras, and TorchScript formats. PyTorch will be available soon.

- Inference optimization is available for NVIDIA T4, V100, and K80 GPUs, and for Intel CPUs.

- The platform is accessible through a user-friendly web interface with step-by-step instructions, or via an API.

- It is also highly secure, complying with security standards such as ISO 27001 and ISO 27799.

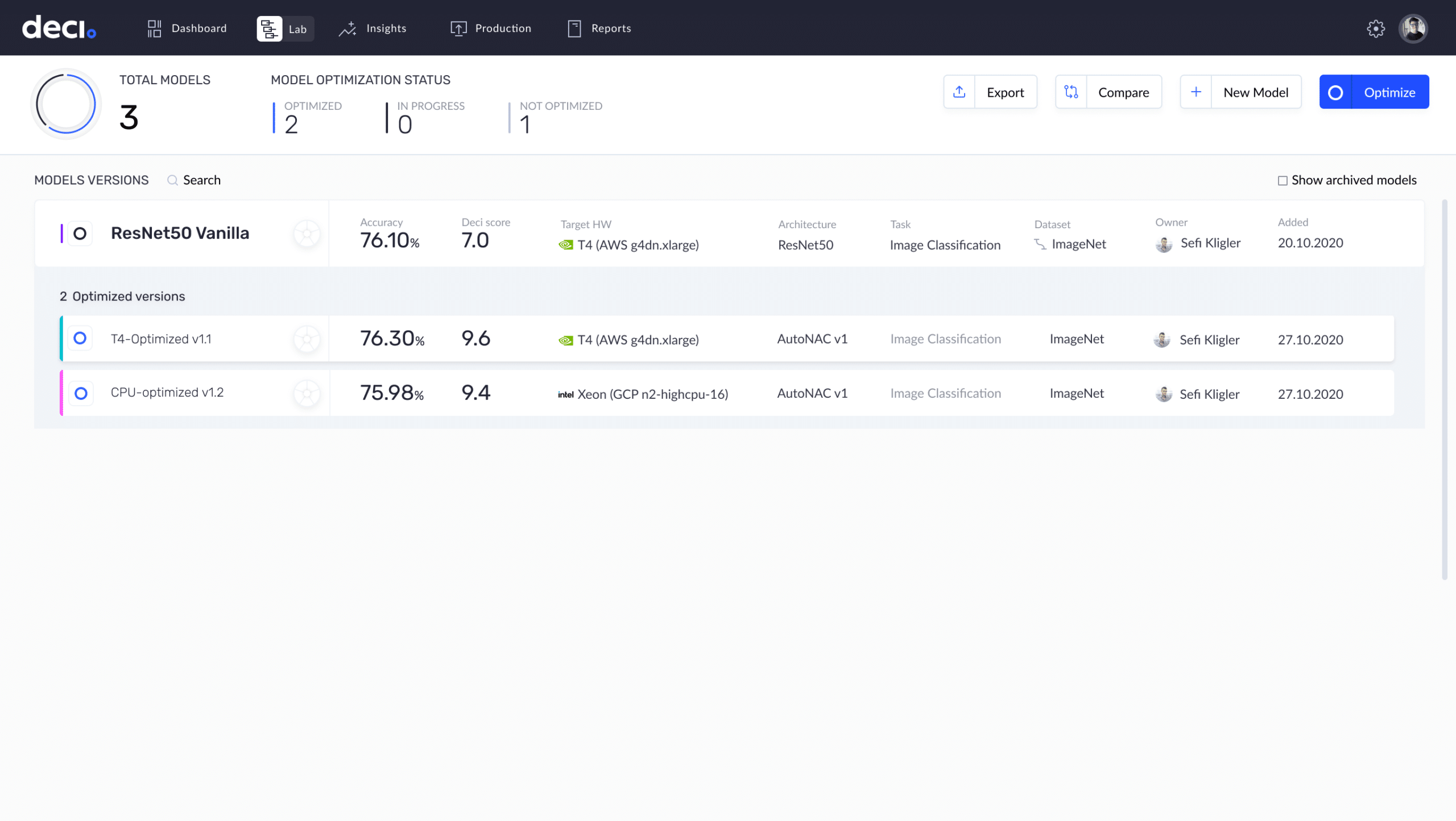

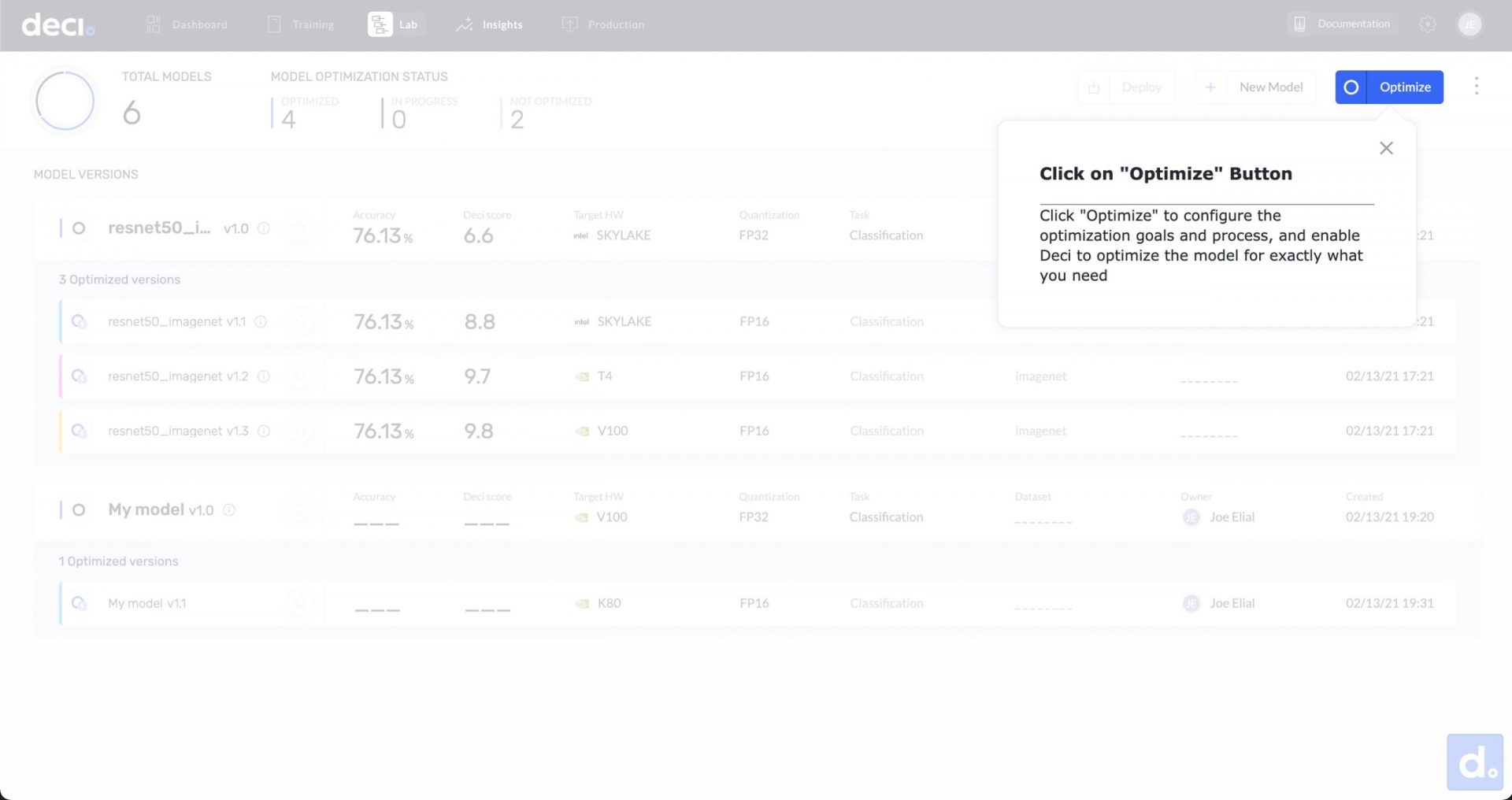

Lab Screen

The lab screen enables users to manage the lifecycle of their deep learning models, optimize inference performance, choose the best candidate for production, and deploy models.

- To get started, upload your own model or start quickly with the preloaded ResNet-50 model, which has been optimized for CPU or GPU.

- No data is needed for the optimization, and the optimization does not affect accuracy beyond a minor statistical error.

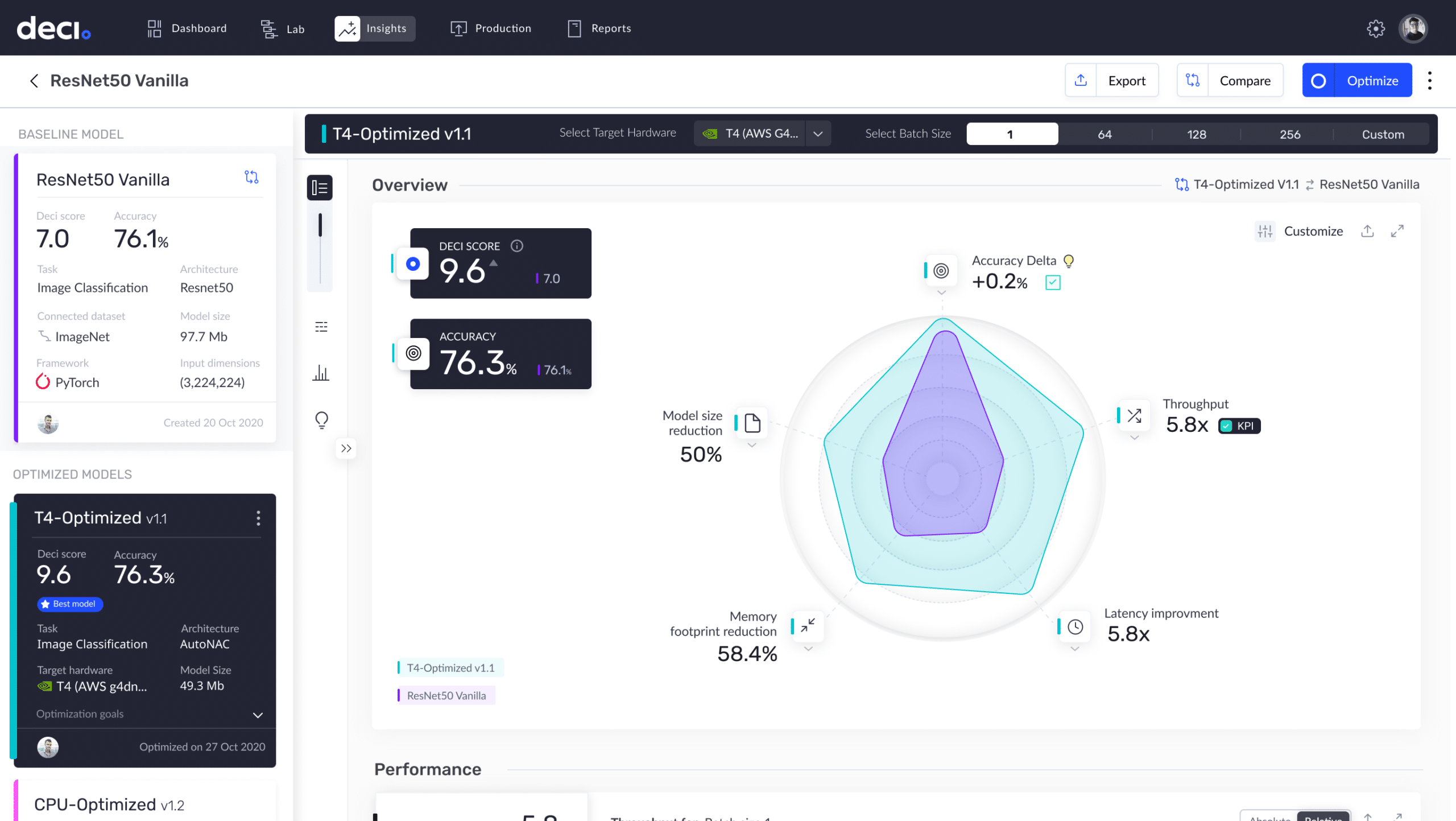

Insights Screen

The insights screen allows users to explore a model’s capabilities and, more specifically, to benchmark across models and hardware. One cool feature is the ability to test the model on several batch sizes to select the optimal one for production.

- It aggregates all of your deep learning model’s performance indicators in one place.

- The screen features the Deci Score, a single metric that encapsulates your model’s overall performance.

- For a deeper dive, additional metrics show the model’s expected behavior in production and its performance gain. A view of the cost reduction achieved by Deci’s platform will be available soon.
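Choosing a production batch size usually means trading throughput against latency. The sketch below shows one generic selection rule under our own assumptions (it is not how the insights screen computes its recommendation): keep only batch sizes whose per-batch latency fits a latency budget, then pick the one with the highest throughput. `pick_batch_size` and the linear cost model are hypothetical; in practice `latency_ms` would wrap a real measurement.

```python
from typing import Callable, Iterable, Optional, Tuple

def pick_batch_size(latency_ms: Callable[[int], float], candidates: Iterable[int],
                    budget_ms: float) -> Optional[Tuple[int, float]]:
    """Return (batch_size, throughput in samples/s) with the highest throughput
    among batch sizes whose per-batch latency stays within `budget_ms`, or None."""
    best: Optional[Tuple[int, float]] = None
    for b in candidates:
        lat = latency_ms(b)
        if lat > budget_ms:
            continue  # this batch size violates the latency budget
        throughput = b / (lat / 1000.0)
        if best is None or throughput > best[1]:
            best = (b, throughput)
    return best

def toy_latency(batch_size: int) -> float:
    # Toy cost model (an assumption, not measured data): 2 ms overhead + 0.5 ms per sample.
    return 2.0 + 0.5 * batch_size

if __name__ == "__main__":
    # Batch size 64 would take 34 ms and exceed the budget, so 32 is chosen.
    print(pick_batch_size(toy_latency, [1, 2, 4, 8, 16, 32, 64], budget_ms=20.0))
```

Because a fixed per-call overhead is amortized over more samples, throughput grows with batch size until the latency budget cuts it off.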

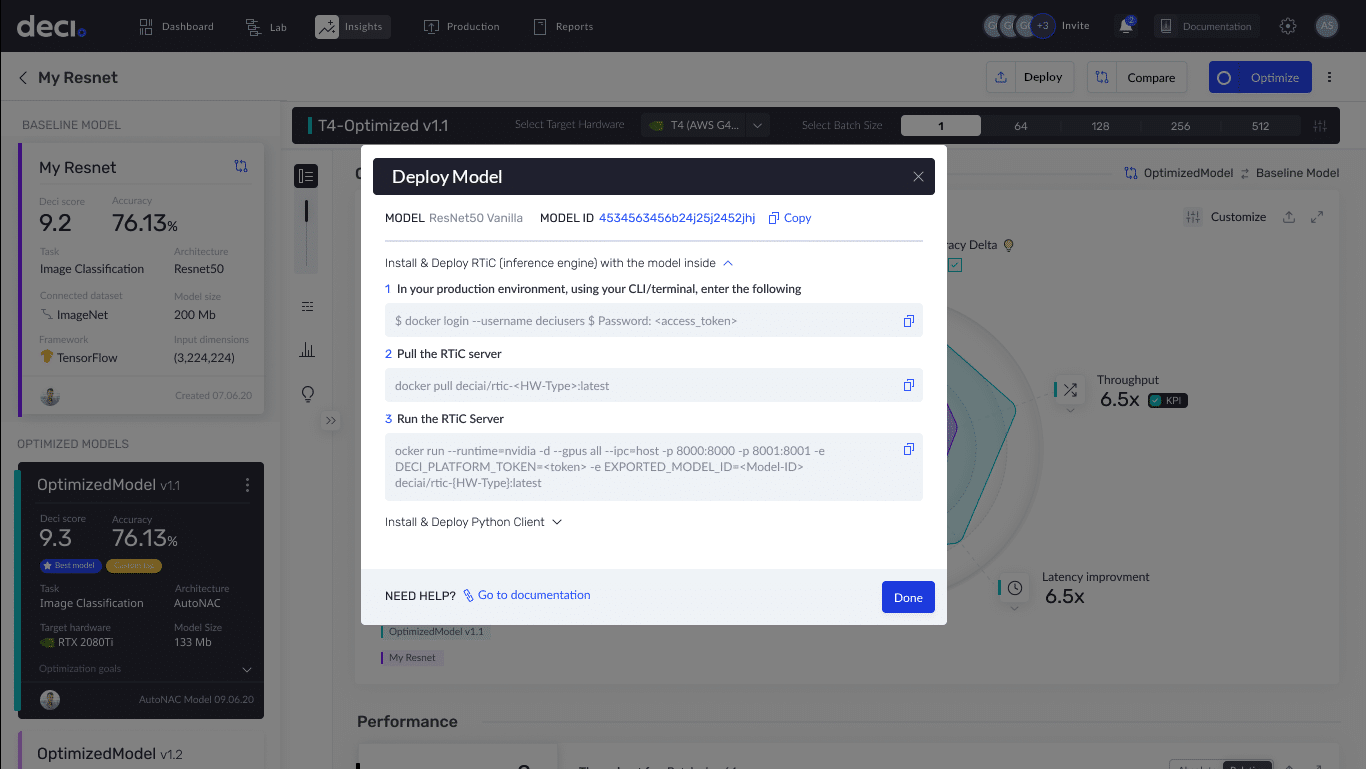

Deployment Options

- Deployment is currently available for CPU or GPU environments that can run containers, mainly cloud, data center, and on-premises environments. We will soon add support for non-containerized environments such as edge and mobile.

- Deployment and serving are handled seamlessly through Deci’s Runtime Inference Container (RTiC), a Docker container that pulls models from the platform and serves them. RTiC is accessible through our fully documented Python client, so you can integrate it into your application through a standard API.

Optimizing AI Models Using AI is the Future

To create and deploy effective AI solutions, organizations, data scientists, and deep learning engineers need tools that can help overcome the increasing complexity and diversity of neural network models.

We realized that the most effective way to solve this challenge is to leverage AI itself. This is what we want to accomplish with our technology, and we’re just getting started.

Deci’s Deep Learning Platform is well documented; to find out more, read our documentation. Signing up to the platform is free. With just a valid email address, you can instantly accelerate your inference by up to 5X without compromising accuracy.

To the data science community: we’d appreciate any thoughts and feedback you can share. We look forward to adding more cool features that will be valuable to you.