In this blog, we walk you through how to convert a model from PyTorch to CoreML using the coremltools package.

The world of machine learning continues to expand to new applications and use cases. Efforts to reduce model size, memory footprint, and power consumption are not only making machine learning more accessible but also enabling deployment in a wide range of environments, from expensive GPUs to edge devices. Moving a deep learning model from a GPU to other devices, particularly those at the edge, requires a deep learning framework that targets those devices.

One of the most popular frameworks is Apple’s Core ML, a foundational framework for on-device inference. Core ML brings machine learning models to iOS applications on end-user devices, and it can also build and train models for various tasks, including:

- Natural language processing

- Speech processing

- Sound analysis

- Computer vision

Additionally, you can train models using AI libraries like PyTorch and TensorFlow and then convert them to the Core ML format using the Core ML Tools (coremltools) module.

Many AI developers can get stuck converting models between various frameworks. This can cause an enormous headache and inhibit the ability of developers to transfer models across different hardware.

If that is something that you have experienced, then read on. We’ll discuss how model conversion can enable machine learning on various hardware and devices, and give you specific guidelines for how to easily convert your PyTorch models to Core ML using the coremltools package. At the end of this blog, you will feel ready and confident to convert your PyTorch model to Core ML.

Why Convert Your Models from PyTorch to CoreML?

Just six years ago, machine learning training and deployment were restricted to large-scale high-performance servers. Today, machine learning is commoditized, making it accessible everywhere, including comparatively low-resource devices such as mobile phones.

Machine learning researchers and practitioners have made progress on this front by optimizing both the hardware and software required to deploy and execute machine learning techniques.

Apple has optimized its silicon hardware by introducing powerful CPUs, GPUs, and Neural Engine (ANE) – Apple’s neural processing unit (NPU). These processing components are embedded in Apple’s proprietary chip.

- Apple’s CPUs leverage the BNNS (Basic Neural Network Subroutines) framework which optimizes neural network training and inference on the CPU.

- The GPUs use the Metal Performance Shaders (MPS) framework to achieve optimal neural network performance.

- The ANE is like a GPU, but it is specifically designed to accelerate neural network operations such as matrix multiplies and convolutions. With 16-core ANE hardware, Apple achieves a peak throughput of 15.8 teraflops on the iPhone 13 Pro using the A15 Bionic chip, significantly more processing power than previous devices.

However, to leverage Apple’s powerful hardware capabilities, your model must be converted to Core ML format. The Core ML library fully utilizes Apple’s hardware to optimize on-device performance. Core ML models can leverage CPU, GPU, or ANE functionalities at runtime. It can also split the model to run different sections on different processors.

Core ML supports a number of libraries from which ML models can be converted (to be discussed in the next section). Furthermore, once the model is deployed on the user’s device, it does not need a network connection to execute, which enhances user data privacy and application responsiveness.

Let’s now discuss the components of the coremltools module, which is used for model conversion.

Understanding the Core ML Tools Module – coremltools

coremltools is a Python package that primarily provides a Unified Conversion API to convert AI models from third-party frameworks and packages like PyTorch, TensorFlow, and more to the Core ML model format.

In addition to model format conversion, the coremltools package is useful for reading, writing, and optimizing Core ML models. It also has utilities to compress neural network weights and reduce the space the model occupies.

AI practitioners can convert trained deep learning models to Core ML from the following libraries:

- PyTorch for deep learning models

- TensorFlow 1 & TensorFlow 2 for deep learning models, including Keras API

- SuperGradients for deep learning models

A typical conversion process involves loading the model, performing model tracing (discussed below) to capture its operations, and using the convert() method of the Unified Conversion API to obtain an MLModel object, which represents the converted Core ML model. After conversion, you can integrate the Core ML model into your iOS application using Xcode and run predictions.

Developers can customize Core ML models to a certain extent by leveraging the MLModel class, NeuralNetworkBuilder class, and the Pipeline package. Let’s discuss this further in the next section.

Core ML’s MLModel & spec Object

An MLModel object encapsulates all of the Core ML model’s methods and configurations. Once the model is converted to Core ML format, developers can load it using MLModel to modify the model’s input and output descriptions, update the model’s metadata (like the author, license, and version), and run inference on-device.

The Core ML model has a spec object which can be used to print and/or modify the model’s input and output description, check MLModel’s type (like a neural network, regressor, or support vector), save the MLModel, and convert/compile it in a single step.

Core ML’s NeuralNetworkBuilder

Once a model is converted to the Core ML format, developers can personalize it using NeuralNetworkBuilder. The NeuralNetworkBuilder can inspect the model layers using the spec object and view and/or modify the input features to extract their type and shape.

Using the neural network’s spec object, developers can further update the input and output descriptions and metadata of the MLModel. Moreover, the model’s layers, loss, and optimizer can be made updatable.

The Core ML Pipeline

A pipeline consists of one or more models, such as a classifier or regressor. It can also include pre-processing steps, such as embedding or feature extraction, and post-processing steps, such as non-maximum suppression. Using coremltools, developers can build an updatable pipeline model by leveraging the spec object of an MLModel.

How to Convert From PyTorch to CoreML

Converting a deep learning model from PyTorch to a Core ML model is quite easy. Typically, there are two methods used for this conversion:

- Direct conversion from PyTorch to CoreML model

- Conversion of PyTorch model to CoreML via ONNX format

Let’s discuss these methods below:

Option 1: Convert Directly From PyTorch to Core ML

As of coremltools version 4.0, developers can directly convert PyTorch models to Core ML without having to first save them in the ONNX (Open Neural Network eXchange) format. The coremltools module uses the Unified Conversion API to perform this conversion. Keep in mind that this method is recommended for iOS 13, macOS 10.15, watchOS 6, tvOS 13, or newer deployment targets. Older deployments can be performed using the second method.

1. Extract PyTorch Model’s TorchScript Representation Using JIT Tracer

The first step is to generate a TorchScript version of the PyTorch model. This is a way to create optimizable and serializable models using PyTorch code. TorchScript representation can be obtained using PyTorch’s JIT tracer.

Model tracing determines all the operations that are executed when a model processes input data through its layers. torch.jit.trace() runs a sample input tensor through the trained PyTorch model to capture the relevant operations. The input tensor can be taken from training or validation data, or it can be a random tensor. A random input tensor suitable for torch.jit.trace() looks like this:

example_input = torch.rand(1, 3, 224, 224)

Sample PyTorch JIT tracing code for the model is shown in the snippet below. It imports the torch library and loads a pre-trained MobileNetV2 model from the torchvision model repository. The random input tensor is then passed through the trained model to obtain the model trace using the torch.jit.trace() method.

The output of this method is a traced model that we’ll use in the next step.

import torch

import torchvision

# Load a pre-trained version of MobileNetV2

torch_model = torchvision.models.mobilenet_v2(pretrained=True)

# Set the model in evaluation mode.

torch_model.eval()

# Trace the model with random data.

example_input = torch.rand(1, 3, 224, 224)

traced_model = torch.jit.trace(torch_model, example_input)

out = traced_model(example_input)
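A quick sanity check after tracing is to confirm that the traced module reproduces the original model’s output on a fresh input. The sketch below assumes a small hypothetical network, TinyConvNet, in place of MobileNetV2 so that it runs without downloading weights; the same comparison should apply to any traced model.

```python
import torch
import torch.nn as nn


class TinyConvNet(nn.Module):
    """Hypothetical stand-in for a real network such as MobileNetV2."""

    def __init__(self):
        super().__init__()
        self.conv = nn.Conv2d(3, 8, kernel_size=3, padding=1)
        self.pool = nn.AdaptiveAvgPool2d(1)
        self.fc = nn.Linear(8, 10)

    def forward(self, x):
        x = torch.relu(self.conv(x))
        x = self.pool(x).flatten(1)
        return self.fc(x)


torch.manual_seed(0)
model = TinyConvNet().eval()
example_input = torch.rand(1, 3, 224, 224)

# Trace the model, then compare eager and traced outputs on a new input.
traced_model = torch.jit.trace(model, example_input)
new_input = torch.rand(1, 3, 224, 224)
with torch.no_grad():
    eager_out = model(new_input)
    traced_out = traced_model(new_input)

outputs_match = torch.allclose(eager_out, traced_out, atol=1e-5)
print("Traced output matches eager output:", outputs_match)
```

If the outputs diverge, the model most likely contains data-dependent control flow, which is discussed in the challenges section of this post.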

2. Convert the Traced PyTorch Model to Core ML Model

Finally, the traced model can be converted to the Core ML model using the Unified Conversion API’s convert() method.

The following code snippet shows the final conversion. The convert() method primarily takes two arguments: the traced model and the desired input type for the converted model. The input type can be one of two types: TensorType or ImageType.

We are using TensorType in this conversion. Additionally, developers can use a third argument, convert_to="mlprogram", to produce an ML program, which is saved in the Core ML model package format (.mlpackage) and stores the model’s metadata, architecture, weights, and learned parameters in separate files. Here, we’ll save the model as an MLModel file (.mlmodel binary file format) instead. This neural network format was the default before coremltools 7.0; newer releases default to ML programs, so the snippet passes convert_to="neuralnetwork" explicitly.

import coremltools as ct

# Using TensorType in the inputs parameter:

# Convert to a Core ML neural network using the Unified Conversion API.

model = ct.convert(

traced_model,

inputs=[ct.TensorType(shape=example_input.shape)],

convert_to="neuralnetwork",

)

# Save the converted model.

model.save("newmodel.mlmodel")

# Or, after converting with convert_to="mlprogram", save it as a .mlpackage file instead:

# model.save("newmodel.mlpackage")

Option 2: Convert from PyTorch to CoreML via ONNX Format

If direct conversion from PyTorch to Core ML is not supported because you are targeting older platforms, you can first convert your PyTorch model to ONNX format and then convert it to Core ML.

This approach is more common as ONNX is an open format industry standard that offers more flexibility to move your models between different frameworks.

1. Convert Model from PyTorch to ONNX

PyTorch supports ONNX format conversion by default. The code snippet below shows the conversion process.

Load a pre-trained model, define a sample input tensor to run tracing, and finally, use the torch.onnx.export() method to export the model in ONNX format.

import torch

import torchvision

# Load a pre-trained version of MobileNetV2

torch_model = torchvision.models.mobilenet_v2(pretrained=True)

# Set the model in evaluation mode.

torch_model.eval()

# Create random example data; the exporter uses it to trace the model.

example_input = torch.rand(1, 3, 224, 224)

# Define the input and output names for the ONNX model.

input_names = [ "actual_input" ]

output_names = [ "output" ]

# Export our model to ONNX format

# Set your desired model name ("mobilenet_v2" is only an example)

model_name = "mobilenet_v2"

onnx_filename = model_name + ".onnx"

torch.onnx.export(

torch_model,

example_input,

onnx_filename,

verbose=False,

input_names=input_names,

output_names=output_names,

export_params=True,

)

2. Convert Model from ONNX to Core ML

Core ML Tools provides an ONNX converter. However, it has been deprecated since coremltools 4 and is not available in recent coremltools releases, so this route requires an older version of the package. For PyTorch models, Core ML Tools recommends directly using the PyTorch converter discussed above.

The code snippet below converts the ONNX Model to Core ML format:

import coremltools as ct

# Convert from ONNX to Core ML

# Note: requires an older coremltools release that still ships the ONNX converter.

model = ct.converters.onnx.convert(model=onnx_filename)

Challenges When Converting a Model From PyTorch to CoreML

One major challenge when converting a model from PyTorch to CoreML is obtaining the TorchScript representation. If the PyTorch model uses a data-dependent control flow such as conditional statements or loops, then model tracing would prove inadequate. Tracing cannot generalize the representations for all control paths.

For instance, consider a model where its convolutional layer is executed inside a loop to cater to different data inputs. Each data input would result in a different output. When a tracer is executed using a sample input, it will only cover one path of the model whereas another sample input would cover another path. In this way, one model would have more than one trace, which is not ideal for model conversion.
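The effect is easy to reproduce with a toy example. The sketch below assumes a hypothetical module, SignBranch, whose output depends on the sign of the input sum. Tracing it with a positive input records only one branch, so the traced module gives a different answer than the original when the input is negative.

```python
import warnings

import torch
import torch.nn as nn


class SignBranch(nn.Module):
    """Hypothetical module whose output depends on a data-dependent branch."""

    def forward(self, x):
        if x.sum() > 0:
            return x * 2
        return -x


module = SignBranch().eval()
positive = torch.ones(3)
negative = -torch.ones(3)

# Tracing with a positive input records only the `x * 2` path.
with warnings.catch_warnings():
    warnings.simplefilter("ignore")  # silence the TracerWarning about the branch
    traced = torch.jit.trace(module, positive)

eager_result = module(negative)   # takes the `-x` branch
traced_result = traced(negative)  # replays the recorded `x * 2` branch

print("eager :", eager_result)
print("traced:", traced_result)
```

The traced module raises no error here; it simply follows the wrong path, which is why this kind of mismatch can go unnoticed until after conversion.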

In this case, developers can use model scripting or a combination of tracing and scripting to obtain the required TorchScript representation. Model scripting uses PyTorch’s JIT scripter. However, the support for model scripting in coremltools is currently experimental.

By scripting the parts of the model that contain control flow, developers can capture its entire structure. They can apply scripting to the whole model or just to a part of it, in which case a mix of tracing and scripting is ideal. The statement below applies JIT scripting to a model:

scripted_model = torch.jit.script(model)
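To make the mixed approach concrete, here is a minimal sketch in which only the control-flow part is scripted and the surrounding module is traced. The function signed_scale and the Wrapper module are hypothetical names invented for this illustration, and the sketch covers the PyTorch side only; whether a given hybrid model converts cleanly with coremltools still needs to be checked case by case.

```python
import torch
import torch.nn as nn


@torch.jit.script
def signed_scale(x: torch.Tensor) -> torch.Tensor:
    # Data-dependent branch: scripting keeps both paths in the graph.
    if x.sum() > 0:
        return x * 2
    return -x


class Wrapper(nn.Module):
    """Hypothetical model: a traceable layer followed by scripted control flow."""

    def __init__(self):
        super().__init__()
        self.linear = nn.Linear(3, 3, bias=False)
        # Identity weights keep the arithmetic easy to follow.
        with torch.no_grad():
            self.linear.weight.copy_(torch.eye(3))

    def forward(self, x):
        return signed_scale(self.linear(x))


model = Wrapper().eval()

# Tracing the outer module keeps the call into the scripted function intact.
hybrid = torch.jit.trace(model, torch.ones(1, 3))

positive_out = hybrid(torch.ones(1, 3))   # follows the `x * 2` path
negative_out = hybrid(-torch.ones(1, 3))  # follows the `-x` path
print(positive_out, negative_out)
```

Because the branch lives inside a scripted function, both paths survive tracing, and the hybrid module responds correctly to inputs of either sign.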

Another potential challenge is operations that are not supported. For example, torchvision.ops.nms is not supported out-of-the-box, and should be added as postprocessing in the Core ML model builder itself.

Alternatives to CoreML

One of the major alternatives to Core ML is TensorFlow Lite, which offers machine learning for mobile devices, microcontrollers, and edge devices. Developers can pick pre-trained TensorFlow models, convert them into the TensorFlow Lite format (.tflite), and deploy them on the platform of their choice. It provides extensive support for iOS deployment as well, for ML applications including, but not limited to:

- Image classification

- Object detection

- Pose estimation

- Speech recognition

- Gesture recognition

- Segmentation

- Natural language question answering

- Style transfer

- Audio classification

To perform any ML task on iOS, TensorFlow offers support for Swift and Objective-C programming languages, which enables on-device machine learning with low latency, smaller model size, hardware compatibility, and fast performance.

You should now feel confident to engage in the process of converting your PyTorch models to CoreML. If you are interested in converting PyTorch models to other frameworks, you can check out our blogs on converting PyTorch to ONNX or to TensorRT.

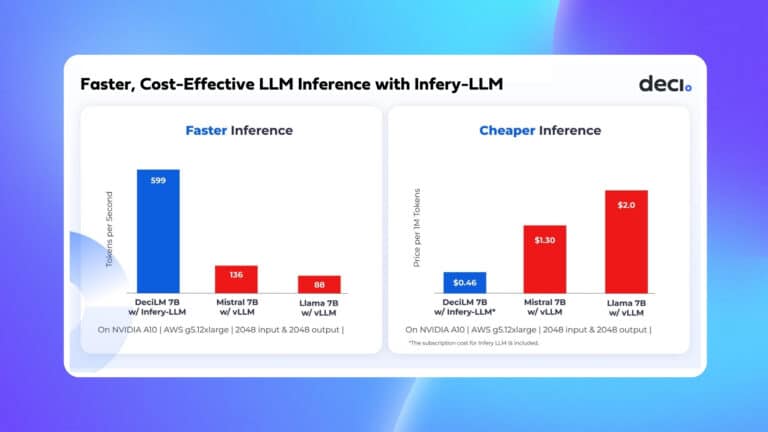

Now it’s time to take your model to the next level. Head to the Deci deep learning development platform and get started with optimizing your model accuracy and inference performance. The Deci platform currently supports the ONNX, PyTorch, TensorFlow (TF2 saved-model), and Keras (h5 format) frameworks.

To start optimizing your model, click on this link to read more and talk to an expert.